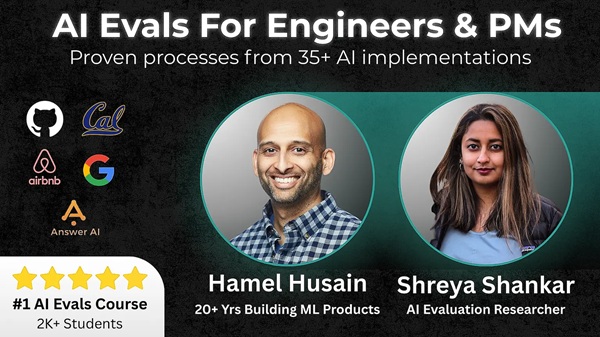

[GroupBuy] AI Evals For Engineers & PMs – Hamel Husain & Shreya Shankar

Original price: $3,000.00. Current price: $320.00.

This article delves into the world of AI evaluation, drawing insights from the “AI Evals For Engineers & PMs” course. It explores how these principles resonate in diverse applications, including ClearChoice reviews, academic curricula such as a Stanford University syllabus, professional qualifications such as enrolled agent study materials, and evaluation scenarios across other sectors.

ClearChoice Reviews

Understanding customer experiences and outcomes is critical for any organization, and ClearChoice reviews offer a window into patient satisfaction and the effectiveness of their services. Evaluating AI systems shares a similar goal: ensuring they deliver intended results and meet user expectations. The core principles of data-driven evaluation, as emphasized in the “AI Evals For Engineers & PMs” course, can be applied to analyze and interpret customer feedback, turning qualitative reviews into actionable insights.

The Power of Data-Driven Review Analysis

Organizations like ClearChoice can leverage data-driven techniques to systematically analyze customer reviews. This involves not just reading the reviews but also categorizing them, identifying key themes, and quantifying sentiment. By applying natural language processing (NLP) techniques, businesses can automate the process of extracting valuable insights from large volumes of textual data, which becomes essential to improving service quality and addressing customer concerns.

The connection between AI evaluation and ClearChoice reviews lies in what successful implementations have in common. In both scenarios, setting clear metrics for success is critical, and data analysis can then show areas needing improvement. By quantifying sentiment and identifying frequently used terms, a business can transform subjective feedback into tangible data points that guide appropriate adjustments.

The same principles used to evaluate the performance of AI models can be applied to customer reviews. Error tracking is one example: when customers are dissatisfied with a service, systematically logging and categorizing their complaints can help identify the cause of the issue.

From Vibe Checks to Quantifiable Metrics

The AI Evals course emphasizes moving beyond “vibe checks” and subjective assessments to data-driven measurements. This resonates strongly with the need for ClearChoice and similar organizations to establish concrete metrics for evaluating customer satisfaction. Rather than relying solely on anecdotal evidence, companies can employ sentiment analysis tools, track the frequency of specific keywords related to positive or negative experiences, and monitor trends in customer ratings.

This data-driven approach allows ClearChoice to identify areas where it is excelling and areas that need improvement. For example, if a large number of reviews mention the word “pain” negatively or “comfort” positively, the company can focus its analysis on how customer management can improve those experiences.

The shift towards the data-driven approach ensures that decision-making is informed by evidence rather than guesswork, leading to more effective strategies for enhancing customer satisfaction and business performance.
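The keyword tracking described above can be sketched in a few lines of Python. This is a minimal illustration, not a method taken from the course: the sample reviews, the `keyword_counts` function, and the rating threshold are all assumptions made for the example.

```python
import re
from collections import Counter

# Hypothetical sample data: (review text, star rating out of 5).
reviews = [
    ("The staff kept me in comfort the whole visit.", 5),
    ("I had a lot of pain after the procedure.", 2),
    ("Great comfort and clear communication.", 4),
    ("Pain lasted for days and nobody called back.", 1),
]

# Illustrative keywords taken from the example in the text.
KEYWORDS = {"pain", "comfort"}


def keyword_counts(reviews, keywords, low_rating_max=2):
    """Count how many low-rated and higher-rated reviews mention each keyword."""
    counts = {"low": Counter(), "high": Counter()}
    for text, rating in reviews:
        # Assumed threshold: ratings at or below low_rating_max count as low.
        bucket = "low" if rating <= low_rating_max else "high"
        # Use a set so each review counts at most once per keyword.
        words = set(re.findall(r"[a-z']+", text.lower()))
        for word in words & keywords:
            counts[bucket][word] += 1
    return counts


counts = keyword_counts(reviews, KEYWORDS)
print("low-rated reviews mentioning 'pain':", counts["low"]["pain"])
print("higher-rated reviews mentioning 'comfort':", counts["high"]["comfort"])
```

Simple keyword matching like this ignores negation and context, so it is best treated as a first pass that points to reviews worth reading in full.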

Actionable Insights for Continuous Improvement

By applying a robust evaluation framework to ClearChoice reviews, organizations can translate customer feedback into actionable insights. This involves identifying patterns, understanding the root causes of issues, and developing targeted interventions to address specific pain points. The ultimate goal is to create a feedback loop where customer reviews continuously inform and improve the quality of services.

Creating a data flywheel ensures ongoing enhancements. The cycle consists of gathering reviews, analyzing the data to find key areas for improvement, implementing any necessary changes, and then monitoring new reviews to evaluate the impact of those changes. This iterative approach helps businesses refine their strategies and processes over time, supporting long-term success.
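The monitoring step of this cycle can be illustrated with a small before-and-after comparison. The sketch below is an assumption-laden example rather than a prescribed method: the `rating_change` function and the rating lists are hypothetical, and a raw difference in averages does not by itself show that the change caused the improvement.

```python
from statistics import mean


def rating_change(ratings_before, ratings_after):
    """Return the difference in mean rating between two review periods."""
    if not ratings_before or not ratings_after:
        raise ValueError("need at least one rating in each period")
    return mean(ratings_after) - mean(ratings_before)


# Hypothetical star ratings collected before and after a service change.
before = [3, 4, 2, 3]
after = [4, 5, 4, 3]

delta = rating_change(before, after)
print(f"Change in mean rating: {delta:+.2f}")
```

With larger samples, the same comparison could be repeated after every change so that each turn of the flywheel leaves a measurable record.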

This process aligns with the Continuous Integration/Continuous Deployment (CI/CD) principles advocated in the AI Evals course, which emphasize the importance of ongoing monitoring, evaluation, and optimization in AI systems. This continuous improvement cycle ensures that AI models, like customer service strategies, remain aligned with evolving user needs and expectations.

Stanford University Syllabus

A Stanford University syllabus represents a structured framework for learning, outlining the course’s objectives, content, assessments, and expectations. Just as AI systems require rigorous evaluation, a syllabus benefits from continuous assessment and refinement to ensure it effectively meets the needs of students and facilitates the desired learning outcomes. The principles of AI evaluation can inform the design and improvement of academic curricula.

Aligning Learning Objectives with Assessment Metrics

The “AI Evals For Engineers & PMs” course emphasizes the importance of aligning system goals with evaluation metrics. Similarly, a Stanford University syllabus should clearly define the learning objectives and map them to corresponding assessment methods. This ensures that students are evaluated based on their mastery of the intended skills and knowledge.

An effective alignment makes evaluations more meaningful and relevant. For instance, if a course aims to develop critical thinking skills, discussions, debates, and essay assignments should evaluate a student’s ability to assess, analyze, and synthesize information.

Evaluation and assessment should drive a syllabus’s focus. The more informative an assessment is, the more the syllabus should be geared toward helping students succeed at it.

Iterating on Syllabus Design Based on Feedback

The AI Evals course advocates for a lifecycle approach to evaluation, emphasizing the importance of continuous monitoring and improvement. This concept can be applied to syllabus design by soliciting feedback from students and instructors, analyzing the results, and iterating on the curriculum to address any gaps or shortcomings.

This iterative process can involve gathering feedback through surveys, focus groups, or informal discussions. By analyzing this feedback, instructors can identify areas where the syllabus can be improved, such as clarifying learning objectives, refining assessment methods, or adjusting the course content.

It is important to assess the relevance of the syllabus as well as its effectiveness. Instructors and students should both ask whether the syllabus has adapted to changes in society, the current economy, new discoveries, and changes within the community.

Creating Targeted Assessments for Learning Outcomes

AI evaluation involves tailoring evaluation strategies to specific architectures and use cases. Similarly, a Stanford University syllabus should incorporate a variety of assessment methods designed to measure different learning outcomes. This may include exams, quizzes, projects, presentations, and class participation.

The key is to select assessment methods that are aligned with the learning objectives and provide students with opportunities to demonstrate their understanding of the material. For example, if a course focuses on practical skills, hands-on projects and simulations may be appropriate, while courses emphasizing theoretical knowledge may rely more heavily on exams and essays.

Instructors should also consider the backgrounds of the students and the obstacles they may face, and adjust assessment methods accordingly so that a wider range of students can successfully complete the course.

Enrolled Agent Study Materials

Preparing for the Enrolled Agent (EA) exam requires comprehensive enrolled agent study materials that cover all relevant tax laws, regulations, and procedures. The evaluation principles learned in the AI Evals course can be applied to assess the quality and effectiveness of these study materials, ensuring they are up-to-date, accurate, and conducive to successful exam preparation.

Ensuring Accuracy and Completeness

In AI evaluation, ensuring data integrity is paramount. Similarly, enrolled agent study materials must be meticulously checked for accuracy and completeness. Tax laws and regulations frequently change, so the materials must be regularly updated to reflect the latest changes.

Review and analysis of these study materials should be a regular practice. A cross-check with official IRS publications and other reliable sources can help identify and correct errors or omissions, ensuring that students are receiving accurate information.

The material should include the latest tax laws, regulations, and procedures. Because this information changes frequently, students should verify that what they are studying is current.

Incorporating Practical Examples and Scenarios

The AI Evals course emphasizes hands-on exercises and real-world examples. Likewise, effective enrolled agent study materials should incorporate practical examples and scenarios that allow students to apply their knowledge to real-world tax situations.

These examples can help students develop a deeper understanding of the material and improve their ability to solve complex tax problems. Case studies, simulations, and practice exams can provide valuable opportunities for students to test their knowledge and skills.

The more practice examples are included in the study materials, the more helpful they are to students. Real-world scenarios are particularly important as they give the students the experience of working through these situations themselves.

Providing Clear and Concise Explanations

The clarity and conciseness of explanations are crucial for effective learning. Enrolled agent study materials should be written in a clear, straightforward style that is easy for students to understand. Technical jargon should be minimized, and complex concepts should be explained in simple terms.

Diagrams, flowcharts, and other visual aids can further enhance understanding and retention. By presenting information in a clear and concise manner, study materials can help students grasp complex tax concepts more easily.

In addition, students may find it helpful for the study materials to include questions and answers that allow them to check their knowledge. By working through these questions, students gain a better sense of what they understand and what they still need to study.

Howard Gates Judge

The role of a judge such as Howard Gates, or any judicial figure, involves evaluating evidence, interpreting laws, and making impartial decisions. While seemingly disparate from AI evaluation, the underlying principle of unbiased assessment and objective judgment is crucial in both contexts. The rigorous process of evaluating AI systems can draw parallels with the judicial process.

Objectivity in Evaluation

The “AI Evals For Engineers & PMs” course stresses the importance of removing bias from AI evaluation. Similarly, a judge such as Howard Gates must strive for objectivity in their decision-making, setting aside personal beliefs or prejudices.

In both AI evaluation and the judicial process, objectivity can be enhanced by establishing clear criteria, relying on empirical evidence, and seeking input from multiple perspectives. This helps to ensure that decisions are based on facts and evidence rather than subjective opinions.

This emphasizes the importance of making an accurate, truthful, and fair judgement.

Adapting to Evolving Standards

AI technology is constantly evolving, requiring continuous evaluation to keep pace with new developments. Similarly, a judge such as Howard Gates must stay informed about changes in laws and regulations to ensure their decisions remain current and relevant.

Continuous learning and professional development are essential for both AI professionals and judicial figures. This includes staying abreast of new technologies, legal precedents, and evolving ethical standards.

The capacity to adapt to evolving standards is critical for both AI technology and the legal system. Society and technology are constantly changing, and a judge must stay alert to those changes to apply the law appropriately.

The Value of Transparency

Transparency is a key principle in both AI evaluation and the judicial system. Making the evaluation process transparent ensures accountability and helps build trust among stakeholders. Similarly, open court proceedings and published judicial opinions promote transparency and accountability in the legal system.

This includes providing clear explanations of the reasoning behind decisions, allowing others to scrutinize the process and identify potential flaws. Transparency helps to foster trust and confidence in the fairness and integrity of both AI systems and the judicial process.

For judges and evaluators alike, this means being able to state the reasons for a decision and the process used to reach it.

Centric Routing Number

A centric routing number is a unique identifier for a financial institution, facilitating secure and accurate electronic transactions. While seemingly unrelated to AI evaluation, the concept of accurate identification and routing aligns with the need for precise data handling and reliable performance in AI systems. AI eval techniques can be applied to improve the efficiency and security of financial systems.

Efficiency in Data Transmission

The AI Evals course highlights the importance of optimizing data pipelines for efficient processing. Similarly, a centric routing number ensures that financial transactions are routed efficiently and accurately to the correct bank.

Efficient data transmission is critical for both AI systems and financial transactions. Optimizing data pipelines, reducing latency, and minimizing errors are essential for ensuring reliable performance and preventing disruptions.

Financial analysts, for example, can apply AI evaluation principles to enhance their existing evaluation systems. These systems, while robust, could benefit further from incorporating modern evaluation software.

Security in Transaction Handling

Security is paramount in both AI systems and financial transactions. A centric routing number is used to securely identify financial institutions and prevent fraud. The AI Evals course emphasizes the importance of implementing security measures to protect AI systems from unauthorized access and cyber threats.

Strong authentication protocols, encryption, and intrusion detection systems are essential for ensuring the security and integrity of both AI systems and financial transactions. Regular security audits and vulnerability assessments can help identify and address potential weaknesses.

The process of assessing an institution, for example, can be enhanced by incorporating evaluation software, which can help keep information protected from a wide range of external threats.

The Importance of Standardization

Standardization is crucial for interoperability and reliability in both AI systems and financial systems. A centric routing number follows a standardized format, ensuring that it can be recognized and processed by all financial institutions.

Similarly, the AI Evals course advocates for the use of standardized evaluation metrics and methodologies to enable consistent and comparable assessments of AI systems. Standardization promotes interoperability, reduces errors, and facilitates collaboration.

The importance of standardization in both systems highlights the importance of consistency and reliability. By adopting standard models, systems can perform more seamlessly, ensure safety, and protect stakeholders from a wide variety of different risks.

Airbnb Host Review Examples

Airbnb host review examples provide valuable feedback on the quality of accommodation and hospitality services. Analyzing these reviews using the principles of AI evaluation can offer actionable insights for hosts to improve their offerings and enhance guest satisfaction.

Identifying Key Themes in Guest Feedback

The AI Evals course emphasizes the importance of identifying key themes and patterns in data. Similarly, analyzing Airbnb host review examples involves identifying recurring themes related to cleanliness, amenities, communication, and overall experience.

By categorizing reviews based on these key themes, hosts can gain a better understanding of what guests value most and where improvements may be needed. Sentiment analysis techniques can be used to gauge the overall sentiment expressed in the reviews and identify specific areas of concern.

Sentiment analysis can be streamlined by using an evaluation helper, that is, software and tools intended to assist with this entire analysis.

Leveraging Data for Service Improvement

The AI Evals course advocates for using data to drive continuous improvement. Likewise, Airbnb host review examples can be a valuable source of data for hosts to identify areas where they can enhance their services and improve guest satisfaction.

For example, if multiple reviews mention issues with the Wi-Fi connectivity, the host can investigate and address the problem. Similarly, if guests consistently praise the cleanliness of the space, the host can maintain those standards and highlight them in their listing.

In addition, by using an evaluation helper, a wide range of Airbnb hosts can improve their results over time. Hosts can see where they need to improve and which areas are already working well.

Benchmarking Performance Against Competitors

AI evaluation often involves comparing the performance of different systems. Similarly, Airbnb host review examples can be used to benchmark a host’s performance against competitors in the same area.

By analyzing reviews of similar listings, hosts can identify best practices and areas where they can differentiate themselves. This competitive analysis can help hosts fine-tune their offerings and attract more guests.

It would be particularly useful to use an evaluation helper to see what the benchmarks are within a specific city, neighborhood, or location.
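A benchmark of this kind can be as simple as comparing one host’s average rating with the average across comparable listings. The sketch below assumes the ratings have already been collected; the `benchmark` function, the listing names, and the numbers are hypothetical and do not come from any Airbnb API.

```python
from statistics import mean


def benchmark(host_ratings, competitor_ratings):
    """Compare a host's mean rating with the mean of competitors' mean ratings."""
    host_avg = mean(host_ratings)
    # Average each competitor listing first so that listings with many
    # reviews do not dominate the area benchmark.
    area_avg = mean(mean(ratings) for ratings in competitor_ratings.values())
    return {"host": host_avg, "area": area_avg, "gap": host_avg - area_avg}


# Hypothetical ratings for one host and three nearby listings.
host = [5, 4, 5, 4]
competitors = {
    "listing_a": [4, 4],
    "listing_b": [5, 3, 4],
    "listing_c": [3, 5],
}

result = benchmark(host, competitors)
print(f"host {result['host']:.2f} vs area {result['area']:.2f} "
      f"(gap {result['gap']:+.2f})")
```

A positive gap suggests the host is ahead of nearby listings on average, though a handful of reviews per listing is too few to draw firm conclusions.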

Product Evaluation

Product evaluation is a critical process for assessing the quality, functionality, and usability of a product. The principles of AI evaluation, with their emphasis on data-driven assessment and iterative improvement, can be directly applied to enhance the effectiveness of product evaluation processes.

Defining Clear Evaluation Metrics

Just as AI systems require clear evaluation metrics, product evaluation must start with defining specific and measurable criteria for assessing the product’s performance. These metrics may include functionality, usability, reliability, security, and performance.

By establishing clear evaluation metrics, product teams can ensure that they are evaluating the product based on objective criteria rather than subjective opinions. This helps to identify areas where the product excels and areas where improvements are needed.

In addition, these objective measures should be tracked using evaluation software, which can measure various processes and help product teams define specific measures and criteria more objectively.

Incorporating User Feedback

The AI Evals course highlights the importance of incorporating user feedback into the evaluation process. Similarly, product evaluation should involve collecting feedback from users through surveys, interviews, usability testing, and beta programs.

User feedback provides valuable insights into how users interact with the product, what they like and dislike, and what features they would like to see added. This feedback can be used to identify areas where the product needs to be improved to better meet user needs.

For product testing, it is critical to include users of varying backgrounds, demographics, and experiences to ensure that the product has a well-rounded analysis.

Iterative Improvement Through Evaluation

The AI Evals course advocates for a lifecycle approach to evaluation, emphasizing the importance of continuous monitoring and improvement. Similarly, product evaluation should be an ongoing process that informs iterative improvements to the product.

By regularly evaluating the product and incorporating user feedback, product teams can identify and address issues, add new features, and improve the overall user experience. This iterative approach helps to ensure that the product remains relevant, competitive, and aligned with user needs.

After each change, the product should be tested again promptly. It is important to confirm that the changes are successful and that they have not introduced new problems.

Charles Frye

Charles Frye, like many professionals, benefits from ongoing evaluation and assessment of his skills and performance. The principles of AI evaluation, focused on data-driven insights and continuous improvement, apply equally well to personal and professional development.

Setting Goals and Measuring Progress

The AI Evals course emphasizes the importance of setting clear goals and measuring progress towards those goals. Similarly, Charles Frye can benefit from setting professional development goals and tracking his progress over time.

By establishing specific, measurable, achievable, relevant, and time-bound (SMART) goals, Charles can focus his efforts on areas where he wants to improve and track his progress toward achieving those goals. This could involve taking courses, attending workshops, or seeking mentorship from experienced professionals.

This could also include getting feedback from others in the professional community. Feedback from peers, subordinates, and superiors may be helpful.

Identifying Strengths and Weaknesses

AI evaluation involves identifying the strengths and weaknesses of a system. Similarly, Charles Frye can benefit from identifying his strengths and weaknesses through self-assessment, feedback from peers, and performance reviews.

By understanding his strengths and weaknesses, Charles can focus on leveraging his strengths and addressing his weaknesses through training, practice, and mentorship. This will enable him to become a more well-rounded and effective professional.

Charles can then act on these opinions and suggestions by applying them in his professional work.

Continuous Learning and Adaptation

The AI Evals course highlights the importance of continuous learning and adaptation. Similarly, Charles Frye should embrace a mindset of continuous learning and be willing to adapt to new technologies, trends, and challenges in his field.

This can involve reading industry publications, attending conferences, taking online courses, and experimenting with new tools and techniques. By staying up-to-date with the latest developments, Charles can remain competitive and relevant in his field.

This also includes improving on how he does things. Technology is ever changing, so a method from ten years ago may no longer be the best way to handle situations.

Hamel Community Building

Hamel community building, referring to efforts led by Hamel Husain or inspired by his approach, can benefit from the data-driven evaluation principles taught in the AI Evals course. Building a thriving community requires understanding member needs, measuring engagement, and continuously improving the community experience.

Measuring Community Engagement

The AI Evals course emphasizes the importance of measuring system performance. Similarly, Hamel community building efforts require tracking key metrics such as membership growth, participation rates, content consumption, and member satisfaction.

By monitoring these metrics, community organizers can gain insights into what is working well and what areas need improvement. This data-driven approach enables them to make informed decisions about community initiatives and resource allocation.

Surveys, focus groups, and feedback forms are all useful tools to measure engagement. Furthermore, you can use these engagement methods to ensure that all members are equally heard and get the access they need.

Fostering a Positive Community Culture

AI evaluation often involves assessing the quality and trustworthiness of a system. Similarly, Hamel community building requires fostering a positive and supportive community culture that encourages collaboration, respect, and inclusivity.

This can involve establishing clear community guidelines, moderating discussions, promoting positive interactions, and addressing any instances of harassment or discrimination. A positive community culture fosters trust, encourages participation, and enhances the overall community experience.

A positive culture can also improve community engagement, which in turn can snowball into a more valuable community.

Iterating on Community Initiatives Based on Feedback

The AI Evals course advocates for a lifecycle approach to evaluation, emphasizing the importance of continuous monitoring and improvement. Similarly, Hamel community building efforts should involve regularly soliciting feedback from community members and using that feedback to iterate on community initiatives.

This can involve conducting surveys, hosting feedback sessions, and monitoring discussions to identify areas where the community can be improved. By actively listening to community members and incorporating their feedback, organizers can ensure that the community remains relevant, engaging, and aligned with member needs.

This cycle of continued improvement ensures that the community thrives.

George Siemens

Like Charles Frye, George Siemens, as an academic and thought leader, continuously evaluates and refines his theories and approaches. The data-driven evaluation principles from the AI Evals course are relevant to assessing the impact and effectiveness of his work in areas such as connectivism and learning analytics.

Assessing the Impact of Connectivism

George Siemens is known for his work on connectivism, a learning theory that emphasizes the importance of networks and connections in the learning process. Evaluating the impact of connectivism involves measuring its effectiveness in promoting learning outcomes, fostering collaboration, and supporting knowledge sharing.

This can involve conducting research studies, analyzing learning data, and gathering feedback from educators and learners who have adopted connectivist approaches. By assessing the impact of connectivism, Siemens can further refine and develop the theory.

One way to increase the theory’s impact is to make it accessible to a wide base of users.

Evaluating the Use of Learning Analytics

George Siemens has also been involved in the development and application of learning analytics, which involves using data to improve learning outcomes and educational practices. Evaluating the effectiveness of learning analytics requires measuring its impact on student performance, engagement, and retention.

This can involve analyzing student data, conducting experiments, and gathering feedback from educators and administrators who are using learning analytics tools. By assessing the effectiveness of learning analytics, Siemens can help to guide the development and implementation of these tools in ways that maximize their impact.

Learning analytics may also be subject to bias, so it is important to take those concerns into consideration.

Iterating on Theories and Approaches Based on Evidence

The AI Evals course highlights the importance of iterating on systems based on evidence. Similarly, George Siemens can benefit from iterating on his theories and approaches based on empirical evidence and feedback from practitioners.

This can involve conducting research studies, analyzing data, and engaging in dialogue with other experts in the field. By continuously evaluating and refining his work, Siemens can ensure that it remains relevant, effective, and aligned with the needs of learners and educators.

The only way to ensure that his theories remain useful and applicable is to test them in today’s technological society.

Ratchet Walkthrough

A ratchet walkthrough, in the context of a specific software or system, involves a systematic exploration of its features and functionalities. AI evaluation principles, which emphasize careful testing and error analysis, can guide a more thorough and effective walkthrough process.

Creating a Comprehensive Test Plan

The AI Evals course emphasizes the importance of creating a comprehensive test plan. Similarly, a ratchet walkthrough should be guided by a detailed test plan that outlines the specific features and functionalities to be tested, the test cases to be executed, and the expected outcomes.

The test plan should cover all aspects of the software or system, including usability, functionality, performance, security, and accessibility. By following a comprehensive test plan, testers can ensure that they are thoroughly exploring the system and identifying potential issues.

Testers should document what they test and how they test it. This record will also help others who run similar tests.
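A test plan of the kind described above can be written down as data: each case names a feature, the action to run, and the expected outcome, and a small runner records what actually happened. The following is a minimal sketch under stated assumptions; `WalkthroughCase`, `execute_plan`, and the `normalize_username` function standing in for the system under test are all hypothetical names invented for the example.

```python
from dataclasses import dataclass
from typing import Any, Callable


def normalize_username(name):
    """Hypothetical feature under test: trim whitespace and lowercase."""
    return name.strip().lower()


@dataclass
class WalkthroughCase:
    feature: str               # feature or functionality being exercised
    description: str           # what the case checks, in plain words
    run: Callable[[], Any]     # action that exercises the feature
    expected: Any              # expected outcome recorded in the plan


def execute_plan(cases):
    """Run every case and record expected vs. actual for the report."""
    results = []
    for case in cases:
        try:
            actual = case.run()
            passed = actual == case.expected
        except Exception as exc:
            # A crash is documented as a failed case rather than stopping the run.
            actual, passed = repr(exc), False
        results.append({
            "feature": case.feature,
            "description": case.description,
            "expected": case.expected,
            "actual": actual,
            "passed": passed,
        })
    return results


plan = [
    WalkthroughCase("usernames", "trims surrounding spaces",
                    lambda: normalize_username("  Alice "), "alice"),
    WalkthroughCase("usernames", "lowercases input",
                    lambda: normalize_username("BOB"), "bob"),
    WalkthroughCase("usernames", "handles a missing value",
                    lambda: normalize_username(None), ""),
]

results = execute_plan(plan)
for r in results:
    status = "PASS" if r["passed"] else "FAIL"
    print(f"{status} {r['feature']}: {r['description']} -> {r['actual']}")
```

The third case fails on purpose to show how an unexpected error ends up in the documented findings. In practice a team would more likely use an established test framework, but the structure of the plan stays the same.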

Documenting Findings and Reporting Issues

The AI Evals course stresses the importance of documenting findings and reporting issues. Similarly, during a ratchet walkthrough, testers should carefully document all findings, including any errors, bugs, usability issues, or performance problems.

These findings should be reported to the development team in a clear and concise manner, along with detailed information about how to reproduce the issues. By documenting findings and reporting issues effectively, testers can help the development team to quickly identify and resolve problems.

The more complete, clear, and organized the report is, the more it contributes to the product’s success.

Iterative Testing and Refinement

The AI Evals course advocates for an iterative approach to testing and refinement. Similarly, a ratchet walkthrough should be conducted iteratively, with testers providing feedback to the development team after each iteration.

The development team can use this feedback to make improvements to the software or system before the next iteration of testing. By iterating on the testing and refinement process, the development team can ensure that the software or system meets the required quality standards.

The cyclical approach helps ensure that bugs are caught and fixed early.

Payne Tech Application

The Payne Tech application, like any software application, requires thorough evaluation to ensure its functionality, usability, and performance. The principles of AI evaluation, with their emphasis on data-driven assessment and continuous improvement, can be applied to enhance the application’s quality.

Evaluating Application Functionality

AI evaluation often measures the overall functionality of a system. Similarly, the Payne Tech application should be thoroughly evaluated to ensure that all of its features and functions are working as intended.

This can involve testing the application with a variety of inputs and scenarios, and comparing the results to the expected outcomes. Any discrepancies or errors should be documented and reported to the development team for resolution.

Ensuring that each feature works seamlessly is critical for any app or system to be successful.

Assessing User Experience

The AI Evals course highlights the importance of assessing user experience. Similarly, the Payne Tech application should be evaluated for its usability, intuitiveness, and overall user experience.

This can involve conducting usability testing with a representative sample of users and gathering feedback on their experiences. Any issues related to usability or user experience should be addressed in order to improve the application’s overall appeal and effectiveness.

The application should be seamless, intuitive, and useful.

Performance Testing and Optimization

The AI Evals course emphasizes the importance of performance testing and optimization. Similarly, the payne tech application should be evaluated for its performance under various load conditions.

This can involve conducting load testing to assess the application’s ability to handle a large number of concurrent users, and stress testing to determine its breaking point. Any performance bottlenecks should be identified and addressed in order to ensure that the application is able to handle the expected user load.
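As a rough sketch of the load-testing idea, the snippet below steps up the number of concurrent users and records latency at each level. It assumes a hypothetical `handle_request` function standing in for a real call to the application; an actual load test would target the deployed service, usually with a dedicated tool.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def handle_request(user_id: int) -> str:
    """Hypothetical stand-in for one call to the application."""
    time.sleep(0.01)  # simulate a small amount of work
    return f"ok:{user_id}"


def timed_call(user_id: int) -> float:
    """Return how long a single request took, in seconds."""
    start = time.perf_counter()
    handle_request(user_id)
    return time.perf_counter() - start


def run_load_test(concurrent_users: int, requests: int) -> dict[str, float]:
    """Issue `requests` calls using `concurrent_users` worker threads."""
    with ThreadPoolExecutor(max_workers=concurrent_users) as pool:
        latencies = sorted(pool.map(timed_call, range(requests)))
    p95_index = max(0, int(0.95 * len(latencies)) - 1)
    return {
        "requests": len(latencies),
        "mean_s": sum(latencies) / len(latencies),
        "p95_s": latencies[p95_index],
        "max_s": latencies[-1],
    }


# Step up the load; a sharp jump in p95 latency hints at a bottleneck.
results = {users: run_load_test(users, requests=40) for users in (1, 5, 20)}
for users, stats in results.items():
    print(users, {k: round(v, 4) for k, v in stats.items()})
```

Stress testing follows the same pattern: keep raising `concurrent_users` until latency or error rates become unacceptable, and treat that level as the approximate breaking point.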

The system should be tested under varying conditions because usage patterns and load differ from user to user. Knowing its breaking point also helps the team understand safety margins and risk.

Subjective Judgement

Subjective judgement plays a role in many evaluation processes, including AI evaluation, academic grading, and performance reviews. However, it is important to supplement subjective judgement with objective data and metrics to ensure fairness, consistency, and accuracy.

The Limitations of Subjective Judgement

While subjective judgement can provide valuable insights and perspectives, it is not without its limitations. Subjective judgements can be influenced by personal biases, emotions, and experiences, which can lead to inconsistencies and inaccuracies.

In addition, subjective judgments can be difficult to defend or justify, as they are often based on gut feelings or intuition rather than objective criteria. For these reasons, it is important to supplement subjective judgments with objective data and metrics.

This includes ensuring that subjectivity does not play a disproportionate role in decision-making.

Incorporating Objective Data and Metrics

The AI Evals course emphasizes the importance of incorporating objective data and metrics into the evaluation process. Similarly, when making decisions that involve subjective judgement, it is important to collect and analyze objective data to support those decisions.

This can involve gathering data on performance, behavior, or outcomes, and using that data to inform the decision-making process. By incorporating objective data and metrics, evaluators can reduce the influence of personal biases and make more informed and defensible decisions.

It is also important to be aware of any assumptions and biases that may impact the decision-making process.

Striving for Fairness and Consistency

Regardless of whether subjective or objective factors carry more weight in a given scenario, fairness and consistency must always be considered.

Even when subjective judgments are necessary, it is important to strive for fairness and consistency. This can involve establishing clear criteria for making judgments, providing training for evaluators, and implementing processes for reviewing and appealing decisions.
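One way to check whether evaluators are applying criteria consistently is to measure how often two of them agree beyond what chance alone would produce. The sketch below uses Cohen's kappa for that purpose; the choice of statistic and the made-up labels are assumptions for illustration and are not taken from the course.

```python
from collections import Counter


def cohens_kappa(rater_a: list[str], rater_b: list[str]) -> float:
    """Agreement between two raters, corrected for chance agreement."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty set of items")
    n = len(rater_a)
    # Share of items on which the two raters gave the same label.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each rater's label frequencies.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:  # both raters used one identical label throughout
        return 1.0
    return (observed - expected) / (1 - expected)


# Made-up labels from two evaluators judging the same ten items.
rater_a = ["pass", "pass", "fail", "pass", "fail", "pass", "pass", "fail", "pass", "pass"]
rater_b = ["pass", "fail", "fail", "pass", "fail", "pass", "pass", "pass", "pass", "pass"]

kappa = cohens_kappa(rater_a, rater_b)
print(f"kappa = {kappa:.3f}")
```

A value near 1 suggests the criteria are being applied consistently, while a value near 0 suggests agreement is little better than chance and that the criteria or evaluator training may need attention.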

The overall goal is to minimize the impact of personal biases and ensure that all individuals are treated fairly and equitably.

Eval Helper

An eval helper, a software tool designed to assist in the systematic evaluation of AI systems, embodies the principles taught in the AI Evals course. This tool can automate tasks, provide insights, and help ensure that evaluations are comprehensive and data-driven.

Automating Evaluation Tasks

One of the key benefits of an eval helper is its ability to automate many of the tasks involved in AI evaluation. This can include data collection, data analysis, metric calculation, and report generation.

By automating these tasks, evaluators can save time and resources, and focus their attention on more strategic aspects of the evaluation process. Automation can also help to reduce the risk of human errors and ensure that evaluations are conducted consistently.
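To make the metric-calculation and report-generation steps concrete, here is a minimal sketch of what such a helper might compute. The `EvalRecord` structure, the field names, and the sample records are hypothetical; the example assumes each output has both an automated verdict and a human label.

```python
from dataclasses import dataclass


@dataclass
class EvalRecord:
    """One evaluated output with an automated verdict and a human label."""
    example_id: str
    predicted_ok: bool  # the automated check says the output is acceptable
    labeled_ok: bool    # a human reviewer says the output is acceptable


def summarize(records: list[EvalRecord]) -> dict[str, float]:
    """Compute accuracy, precision, and recall of the automated check."""
    tp = sum(r.predicted_ok and r.labeled_ok for r in records)
    fp = sum(r.predicted_ok and not r.labeled_ok for r in records)
    fn = sum(not r.predicted_ok and r.labeled_ok for r in records)
    tn = sum(not r.predicted_ok and not r.labeled_ok for r in records)
    total = len(records)
    return {
        "accuracy": (tp + tn) / total if total else 0.0,
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }


def render_report(metrics: dict[str, float]) -> str:
    """Turn the metrics into a short plain-text report."""
    return "\n".join(f"{name}: {value:.2f}" for name, value in metrics.items())


# Made-up records for illustration.
records = [
    EvalRecord("e1", True, True),
    EvalRecord("e2", True, False),
    EvalRecord("e3", False, False),
    EvalRecord("e4", False, True),
    EvalRecord("e5", True, True),
]

metrics = summarize(records)
print(render_report(metrics))
```

Because the same function runs on every batch of records, the numbers are calculated the same way each time, which is the consistency benefit described above.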

The automated system can be improved over time, too.

Providing Data-Driven Insights

An eval helper can also provide valuable data-driven insights into the performance of AI systems. This can include identifying patterns, trends, and anomalies in the data, and generating visualizations that help evaluators to understand the results of their evaluations.

By providing these insights, an eval helper can help evaluators to identify areas where the AI system excels and areas where improvements are needed. This information can then be used to guide the development and deployment of the AI system.

The eval helper itself can be improved over time as well: user feedback can sharpen the insights it produces.

Ensuring Comprehensive Evaluations

An eval helper can also help to ensure that evaluations are comprehensive and cover all relevant aspects of the AI system. This can involve providing templates for test plans, tracking the execution of test cases, and generating reports that summarize the results of the evaluations.

By ensuring that evaluations are comprehensive, an eval helper can help evaluators to identify potential risks and issues that might otherwise be overlooked. Having comprehensive evaluations ensures that the systems and processes are fully examined.

Lesson 8 Homework

Lesson 8 homework, within the context of the “AI Evals For Engineers & PMs” course, provides students with hands-on practice in applying the concepts and methodologies learned in the course. Evaluating the quality and effectiveness of this homework is crucial for ensuring that students are mastering the material.

Assessing Comprehension of Key Concepts

The primary goal of lesson 8 homework is to assess students’ comprehension of the key concepts taught in the course. This can involve grading their responses to questions, analyzing their code, and evaluating their problem-solving skills.

The homework should be designed to challenge students to apply what they have learned to real-world scenarios, and to demonstrate their understanding of the underlying principles of AI evaluation. By assessing their comprehension of key concepts, instructors can identify areas where students may need additional support.

Checking students’ answers matters because it reveals where they are confused and need extra guidance.

Evaluating Practical Application Skills

In addition to assessing comprehension of key concepts, lesson 8 homework should also evaluate students’ practical application skills. This can involve assigning tasks that require students to design and implement evaluation methodologies, analyze data, and generate reports.

The homework should provide students with opportunities to develop their skills in using various tools and techniques for AI evaluation. By evaluating their practical application skills, instructors can assess whether students are able to translate their theoretical knowledge into practical skills.

When there are errors, instructors should ask why the student made them.

Providing Constructive Feedback

Regardless of their success, it is important to provide students with constructive feedback on their lesson 8 homework. This can involve providing written comments on their work, meeting with them individually to discuss their performance, and providing them with opportunities to ask questions and receive clarification.

The feedback should be tailored to each student’s individual needs, strengths, and weaknesses. By providing constructive feedback, instructors can help students to improve their skills and achieve their learning goals.

Constructive feedback helps them learn from their mistakes.

Frye Building

The frye building, as a physical space, can be evaluated for its functionality, accessibility, and overall suitability for its intended purpose. The principles of evaluation, focused on data-driven assessment and continuous improvement, apply equally well to assessing the built environment.

Assessing Functionality and Usability

The evaluation should consider factors such as the layout of the building, the flow of traffic, the availability of amenities, and the suitability of the space for its intended use. User feedback can be an invaluable tool for evaluating the building.

Any issues related to functionality or usability should be addressed in order to improve the overall experience for occupants.

How might the building evolve over time to ensure its continued utility and benefit?

Evaluating Accessibility for All Users

Accessibility is a critical aspect of any building evaluation, and the frye building should be assessed for its accessibility for all users, including those with disabilities. This can involve evaluating the presence of ramps, elevators, accessible restrooms, and other accessibility features.

Accessibility experts can be consulted to assess and measure these features. Any deficiencies in accessibility should be addressed in order to ensure that the building is welcoming and usable for all.

Which features specifically help groups that often face accessibility obstacles?

Sustainability Assessment

The sustainability assessment of the frye building involves evaluating its energy efficiency, resource consumption, and environmental impact. This can involve measuring the building’s energy consumption, water usage, and waste generation, and comparing those data to industry benchmarks.

Any opportunities to reduce the building’s environmental impact should be identified and implemented.

How can you improve the building’s sustainability rating?

Maven Engineers

Maven engineers, like all professionals, benefit from ongoing evaluation and assessment of their skills and performance. The principles of AI evaluation, focused on data-driven insights and continuous improvement, apply equally well to professional development.

Performance Reviews and Feedback

Regular performance reviews and feedback are essential for maven engineers to track their progress, identify areas for improvement, and receive guidance from their managers. Performance reviews should be based on clear and objective metrics, and should provide actionable feedback that engineers can use to improve their skills and performance.

In addition, feedback should be solicited from peers, clients, and other stakeholders to provide a more well-rounded perspective. Performance reviews and feedback help promote a growth mindset and support success.

What skills do they possess? Where do they excel?

Skill Assessments and Training

Skill assessments can be used to identify areas where maven engineers may need additional training or development. These assessments can be tailored to the specific skills required for their roles, and can be used to track their progress over time.

Training programs can be designed to address any skill gaps that are identified, and to provide engineers with opportunities to develop new skills and stay up-to-date with the latest technologies. Assessing a combination of hard and soft skills is helpful to engineers.

How can you best assist Maven Engineers in skill assessments? What technologies and processes work well?

Continuous Learning and Improvement

The AI Evals course highlights the importance of continuous learning and improvement. Similarly, maven engineers should be encouraged to embrace a mindset of continuous learning and be willing to adapt to new technologies, trends, and challenges in their field.

This can involve reading industry publications, attending conferences, taking online courses, and experimenting with new tools and techniques. By staying up-to-date with the latest developments, engineers can remain competitive and relevant in their field.

It’s important to always be curious and ask “why does this work?” and “how can I improve this process?”

Eval Software

Eval software, designed to streamline and enhance the evaluation process, is a direct application of the principles taught in the AI Evals course. Effective eval software automates tasks, provides insights, and helps ensure that evaluations are comprehensive and data-driven.

Automating Data Collection and Analysis

One of the key benefits of eval software is its ability to automate the collection and analysis of data. This can involve collecting data from various sources, such as surveys, databases, and APIs, and using that data to calculate key metrics and generate reports.

By automating these tasks, evaluators can save time and resources, and focus their attention on more strategic aspects of the evaluation process. The key is to make full use of the software’s data analytics, which improves the evaluation and assessment process.

The security of the collected data is paramount and must be carefully considered.

Providing Real-Time Insights

Eval software can also provide real-time insights into the performance of the systems, processes, or individuals being evaluated. This can involve generating dashboards, visualizations, and alerts that help evaluators to quickly identify trends, patterns, and anomalies.

Real-time insights can enable evaluators to take immediate action to address any issues or opportunities that are identified. Real-time insights can be particularly useful for tracking progress towards goals, monitoring performance, and identifying potential risks.

How can individuals utilize and better respond to real-time insights?

Ensuring Traceability and Accountability

Eval software can help to ensure that evaluations are traceable and accountable. This can involve tracking all of the steps involved in the evaluation process, from data collection to report generation, and providing an audit trail that shows who did what and when.

By ensuring traceability and accountability, eval software can help to build trust in the evaluation process and ensure that decisions are based on reliable and accurate information. The evaluations must be reproducible.
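An audit trail of "who did what and when" can be sketched as an append-only log in which each entry stores a hash of the one before it, so later tampering becomes detectable. The `AuditTrail` class, the actor names, and the file name below are hypothetical and only illustrate the idea; real eval software may record its history quite differently.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditTrail:
    """Append-only log where each entry includes a hash of the previous one."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def record(self, actor: str, action: str, detail: str = "") -> dict:
        """Append an entry saying who did what, and when (UTC)."""
        prev_hash = self.entries[-1]["hash"] if self.entries else ""
        entry = {
            "actor": actor,
            "action": action,
            "detail": detail,
            "at": datetime.now(timezone.utc).isoformat(),
            "prev_hash": prev_hash,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    @staticmethod
    def _digest(entry: dict) -> str:
        """Hash every field except the stored hash itself."""
        body = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def verify(self) -> bool:
        """Return True if no entry was altered, removed, or reordered."""
        prev_hash = ""
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True


# Hypothetical steps in one evaluation run.
trail = AuditTrail()
trail.record("alice", "collected_data", "survey_responses.csv")
trail.record("bob", "computed_metrics", "accuracy, recall")
trail.record("alice", "generated_report")
print(trail.verify())
```

Recording every step from data collection to report generation in this way also supports reproducibility, since the log shows which inputs and actions produced a given result.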

How does the software help the various stakeholders?

Hamel Husain

Hamel Husain, with his extensive experience in AI and machine learning, exemplifies the importance of continuous evaluation and refinement in the development of AI systems. His expertise and insights from the AI evaluation landscape provide a framework that professionals in the field can use to enhance their own work.

The Role of Feedback in AI Development

In the realm of AI, feedback is not merely a formality but an essential ingredient for success. Hamel emphasizes the value of incorporating user feedback into every iteration of AI system development. This means actively listening to end-users and stakeholders who interact with the models and algorithms. By understanding their experiences, developers can pinpoint areas where the AI may be falling short or could be enhanced.

This feedback loop turns subjective judgement, in the form of opinions and personal experiences, into actionable insights. Developers should embrace this input, as it can ultimately lead to more intuitive and user-friendly products. How well the AI aligns with user needs and preferences often determines its ultimate success.

Where can organizations best implement feedback mechanisms to improve AI systems? What channels facilitate seamless communication?

Agile Methodologies in AI Projects

Hamel advocates for adopting agile methodologies in AI projects, which focus on iterative development, flexibility, and responsiveness to change. In a rapidly evolving field like AI, traditional linear approaches can quickly become outdated. Instead, agile frameworks allow teams to adapt to new findings, incorporate user feedback, and continuously refine their solutions.

Agile practices—such as sprints and regular retrospectives—enable teams to evaluate their progress regularly and make real-time adjustments. This leads to faster deployment times and reduces the risk of working with obsolete technology or failing to meet user expectations.

What specific agile practices can be most beneficial for AI development? How can teams maintain agility in a structured environment?

Collaborative Innovation

Collaboration is another principle championed by Hamel. He believes that the future of AI will hinge on how well professionals from various disciplines can work together to innovate and solve complex problems. By fostering a collaborative environment, diverse perspectives can be integrated into the development process, leading to more robust AI applications.

Moreover, collaboration encourages knowledge sharing, which can combat the silos often found in tech environments. When engineers, product managers, and users alike contribute their insights, the resulting AI systems can be more versatile and resilient, catering to a wider range of use cases.

How can organizations promote a culture of collaboration within their AI teams? What tools and platforms facilitate effective teamwork?

Workshop Evaluation Questions

Effective evaluations of workshops require carefully crafted questions that elicit meaningful responses from attendees. These questions should aim to gauge the overall effectiveness of the workshop while providing insights into what worked well and what could be improved for future sessions.

Crafting Effective Questions

When designing workshop evaluation questions, clarity is key. Questions should be straightforward and easy to understand to avoid confusion. Open-ended questions can provide rich qualitative data, allowing participants to express their thoughts and experiences in detail. For example, asking “What was your favorite aspect of the workshop?” invites personal reflection and richer feedback than simply using a Likert scale.

Conversely, closed-ended questions can help quantify specific metrics, such as satisfaction levels or relevance of content, making it easier to analyze overall trends. A mix of both types of questions is often the best approach to obtain a comprehensive view of participant experiences.
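Closed-ended responses lend themselves to simple summaries. The sketch below assumes a 1-to-5 Likert scale and made-up satisfaction scores; `summarize_likert` is a hypothetical helper, not something from the course.

```python
from collections import Counter
from statistics import mean


def summarize_likert(responses: list[int], scale_max: int = 5) -> dict:
    """Summarize Likert-scale answers given as integers from 1 to `scale_max`."""
    if not responses:
        raise ValueError("no responses to summarize")
    if any(r < 1 or r > scale_max for r in responses):
        raise ValueError(f"responses must be between 1 and {scale_max}")
    counts = Counter(responses)
    return {
        "n": len(responses),
        "mean": round(mean(responses), 2),
        # How many participants chose each point on the scale.
        "distribution": {score: counts.get(score, 0) for score in range(1, scale_max + 1)},
        # Share of participants who picked one of the two highest scores.
        "top_two_share": sum(counts[s] for s in (scale_max - 1, scale_max)) / len(responses),
    }


# Made-up answers to "How satisfied were you with the workshop?"
satisfaction = [5, 4, 4, 3, 5, 2, 4, 5, 4, 3]
summary = summarize_likert(satisfaction)
print(summary)
```

The distribution is worth reporting alongside the mean, because an average can hide a split between very satisfied and very dissatisfied participants. The open-ended answers then help explain the numbers.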

How can facilitators ensure all voices are heard during evaluations? What methods can increase response rates?

Importance of Timeliness

The timing of evaluations plays a critical role in their effectiveness. Conducting evaluations immediately after the workshop allows participants to recall their experiences while they are still fresh. This immediacy can lead to more accurate and detailed feedback compared to evaluations conducted weeks later, when memories may fade.

Additionally, follow-up evaluations can provide insight into the long-term impact of the workshop, helping organizers understand whether the objectives were met and how the content has been applied since the session.

What strategies can keep participants engaged in the evaluation process? How can follow-up communications reinforce the workshop’s value?

Analyzing and Implementing Feedback

Once evaluation data are collected, the next step involves analyzing the responses and implementing changes based on the feedback received. This can involve identifying common themes, strengths, and weaknesses noted by participants. Only through careful analysis can facilitators recognize patterns and prioritize actionable improvements for future workshops.

Communicating back to participants about how their feedback influenced changes can also enhance engagement and foster a culture of continuous improvement. Participants appreciate seeing their voices reflected in the evolution of workshop offerings.

How can facilitators create a feedback loop that encourages ongoing participation? What tools can simplify the analysis process?

Conclusion

In summation, these discussions highlight the multifaceted nature of evaluation across different domains, from assessing the accessibility and sustainability of the Frye Building to scrutinizing the ongoing learning processes for Maven Engineers and the strategic insights provided by Eval Software. The principles established by figures like Hamel Husain underscore the transformative power of continuous feedback, collaboration, and technology in fostering growth and innovation. Overall, whether it’s through crafting reflective workshop evaluation questions or implementing agile methodologies in AI development, the essence remains focused on improving outcomes through deliberate and thoughtful assessment practices.

Sales Page: https://maven.com/parlance-labs/evals

Delivery time: 12-24 hours after payment
